How to Save Scraped Data to CSV & Excel – Python Web Scraping

How to Save Scraped Data to CSV & Excel - Python Web Scraping

Send download link to:

Once we have scraped data from any website it is important to save it so that we can access it for future use, or do some analysis or clean it. Scraping data and merely displaying on the screen doesn’t make any sense.

When dealing with text data we can save it in multiple ways like in a CSV file, Excel file, Text file, Google Spreadsheet in a Database like MySQL, MongoDB etc. For Images, Videos, Audios and other kind of media we can save it on our local system or in a database.

Saving data to a CSV or Excel file is the most common and most used method for text data as it is easy to do, can be accessed easily and shared. In this tutorial we will see how to save text data to CSV and Excel files.

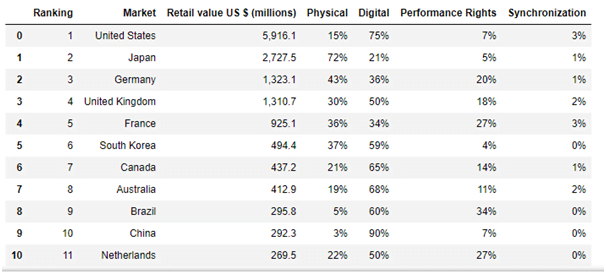

For this tutorial we will go to https://en.wikipedia.org/wiki/List_of_largest_recorded_music_markets and scrape tabular data to save it first to CSV file then to Excel. This Wikipedia page contains lists of Largest recorded music markets from 2004 to 2017. We will scrape data for the year 2017.

There are two ways to save data to CSV and Excel

1. Using CSV module

2. Using Pandas Dataframe.

I personally prefer to use pandas data frame as it is much easier to use and we can instantly visualize and clean the data using it. However we will be learning both the methods in this tutorial.

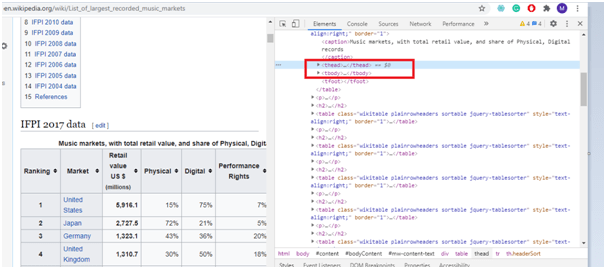

First let’s go to the webpage and inspect the data we want to scrape:

To grab the data in IFPI 2017 Data table, which is a tabular data.

We can see name of columns is under theadtag and rest of the data is under tbody tag.So using these two tags and looping we can scrap the data.

Below is the detailed code for scraping and storing data to CSV and Excel files. For details watch the video:

import bs4

import urllib.request

from urllib.request import urlopen

from bs4 import BeautifulSoup as soup

import csv

filename = 'music.csv'

f = open(filename,'w',newline = '')

music = csv.writer(f)

html = urlopen('https://en.wikipedia.org/wiki/List_of_largest_recorded_music_markets')

bsobj = soup(html.read())

tbody = bsobj('table',{'class':'wikitableplainrowheaders sortable'})[0].findAll('tr')

xl = []

for row in tbody:

cols = row.findChildren(recursive = False)

cols = [element.text.strip() for element in cols]

music.writerow(cols) #Writing to CSV

xl.append(cols)

import pandas as pd

df = pd.DataFrame(data = xl[1:],columns = xl[0])

df.to_excel('world_music.xlsx', index=False,header = False)#Writing to Excel file

Output as pandas data frame:

Learn more how to save data in to MySQL database.